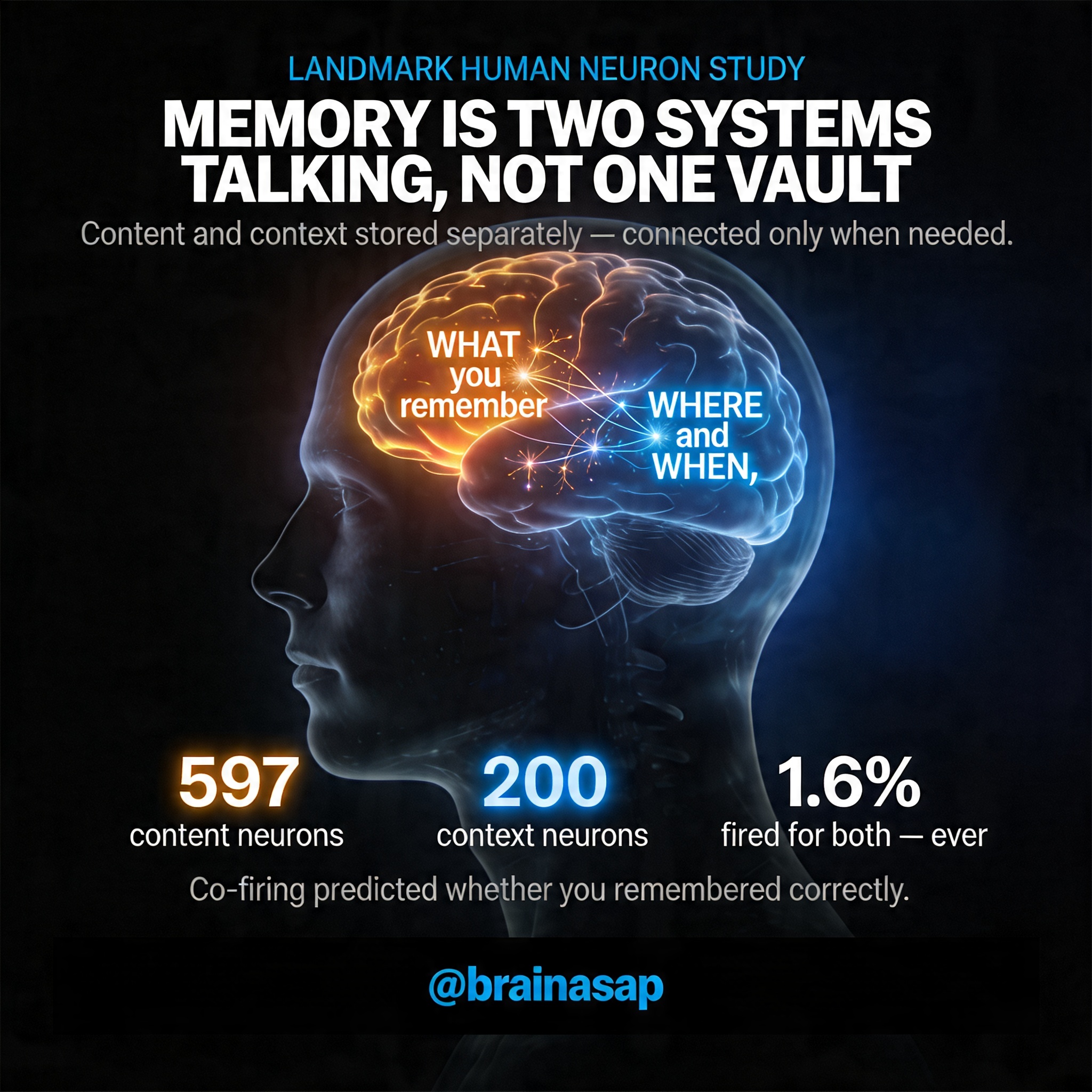

How the Brain Separates What We Remember from Why We Remember It

TL;DR: The brain stores content (what you remember) and context (when and where) in separate neural populations linked by real-time coordination rather than by pre-wired conjunctive cells. The design trades speed for flexibility: it lets you recognize a friend’s face in any setting and apply a principle learned once to countless new situations.

For forty years, the standard picture of memory came largely from rodents. Single neurons in the rat brain fired for specific combinations: this location paired with that smell, this experience locked to that context. If that held in humans, your memories would be locked vaults, each moment stored with all its details permanently attached.

Yet you experience memory differently. You recognize your friend’s face across a thousand contexts. You apply a fact learned once to countless new situations. You imagine scenarios you’ve never lived.

A landmark study recording directly from human neurons in epilepsy patients undergoing presurgical evaluation reveals why: the brain maintains content and context in separate neural populations linked on demand, a design that buys flexibility at the cost of constant, careful coordination.

Key Findings

- Items and context are encoded by largely separate neuronal populations: 597 neurons fired selectively for specific pictures, 200 for the comparison rule being applied, yet only 50 neurons (1.61%) encoded specific picture-context combinations—revealing that the brain avoids pre-coded, rigid memory pairs.

- Anatomical specialization: Context neurons concentrate in the hippocampus and parahippocampal cortex, regions associated with spatial and episodic information, while content neurons are distributed more broadly, suggesting that context coding has a dedicated anatomical home.

- Co-firing predicts accuracy: When a picture neuron and context neuron fired together, the subject was significantly more likely to answer correctly (p < 0.001), tying neural coordination directly to memory performance.

- Real-time synaptic rewiring: Within minutes, stimulus neurons in the entorhinal cortex began predicting context neurons in the hippocampus with a delay of tens of milliseconds—the signature of Hebbian learning physically wiring associations.

- Context-cued retrieval: When a context cue appeared without any visual stimulus, it triggered the neurons for pictures previously paired with it, showing the brain retrieves memory through population reinstatement, not dedicated cells.

- Pattern separation, not retrieval: The handful of conjunctive neurons (1.61%) functioned as pattern separators, encoding single specific combinations rather than serving as memory retrieval units—favoring flexible recombination over pre-packaged storage.

Source: Nature (2026) | Bausch, Mormann et al.

An Elegant Paradox: Separate Populations for Unified Memory

This study involved 16 epilepsy patients undergoing presurgical evaluation, yielding recordings from 3,109 neurons across the medial temporal lobe. Patients saw pairs of pictures and answered comparison questions: “Which is bigger?” “Which is older?” “Which do you prefer?”

They had to keep both pictures in mind and indicate which one matched the rule. Because the same pictures could appear under different questions, content (which picture) and context (which rule) could be measured separately.

The result ran against the conjunctive-coding picture. Most selective neurons responded to items, not context. A neuron responding to a biscuit fired the same way whether the task was size comparison or aesthetic preference.

597 neurons showed pure item selectivity. Meanwhile, 200 other neurons tracked only the rule, firing whenever a specific question appeared, regardless of which pictures showed up. The architecture wasn’t merged—it was modular.

The Puzzle Revealed: Why Separate Systems Work Better Than Merged Ones

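At first, this seems inefficient. Why not bundle items and contexts together, as conjunctive cells in the rodent brain do? The answer is scale: if humans encoded every item-context pair with its own neuron, the number of cells required would grow with the product of items and contexts and quickly become astronomical.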

Instead, only 50 conjunctive neurons emerged, just 1.61% of the 3,109 recorded. That tiny fraction reveals human memory’s true design.
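The shares quoted above can be checked with a few lines of arithmetic. This is a small sketch that uses only the counts reported in this article; the variable and function names are illustrative, and it assumes each percentage is taken against all 3,109 recorded neurons. The article does not say whether the three groups overlap, so the shares are not summed.

```python
# Counts as reported in the article; the total is all recorded neurons.
TOTAL_NEURONS = 3109
counts = {
    "item-selective": 597,
    "context-selective": 200,
    "conjunctive": 50,
}

def percent_of_total(count, total=TOTAL_NEURONS):
    """Return count as a percentage of total, rounded to two decimals."""
    return round(100 * count / total, 2)

# Assumption: every share uses the same denominator (all recorded neurons).
shares = {label: percent_of_total(n) for label, n in counts.items()}

for label, share in shares.items():
    print(f"{label}: {share}%")
```

Under that assumption, the conjunctive share comes out at the 1.61% quoted in the text, roughly a twelfth of the item-selective share.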

Consider the implications. A biscuit neuron fires in any context: size, age, or aesthetic comparison. The same item representation is reused across every rule.

A context neuron fires for “bigger” whichever pictures appear, so the rule is reused across items. This separation enables generalization along each dimension independently. You recognize a friend in a new context because the friend-neuron fires, independent of setting.

You apply a rule learned once to countless new scenarios because rule-neurons activate wherever relevant, independent of the items they were initially paired with.

The hippocampus and parahippocampal cortex showed telling specialization. These regions concentrate context-sensitive neurons while item-selective neurons scatter more widely. Context has a specialized neural home, and the brain recruits these dedicated circuits on demand.

How Two Separate Systems Become One Memory

With items and context encoded separately, the brain faces a binding problem: how does it combine them into a unified memory? Three binding mechanisms work together:

- Coordinated Population Firing: When a picture appeared in a specific task context, the stimulus neuron and context neuron fired together—spikes synchronized. Synchronized activity was stronger when subjects answered correctly (p < 0.001). The brain uses population-level coordination, not dedicated conjunctive cells.

- Real-Time Synaptic Modification: After a picture had been paired with a context for just a few trials, the temporal relationship shifted. Entorhinal stimulus neurons began firing slightly before hippocampal context neurons, predicting their activity with a delay of tens of milliseconds. This is the signature of Hebbian plasticity: a synapse strengthens when one neuron repeatedly helps drive another. Within minutes, the brain rewired connections between stimulus and context populations, forming associations dynamically during the task itself.

- Context-Cued Reinstatement: When a question appeared before any pictures, context neurons activated, then stimulus neurons for pictures previously linked with that context also fired—even without visual input. The context alone retrieved associated items. This was flexible: the same picture neuron reactivated for multiple contexts, and the same context neuron reactivated multiple pictures.
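The lead-lag relationship described in the second mechanism is the kind of pattern a cross-correlogram can expose. The following is a minimal sketch on synthetic spike times, not the study's analysis or data: the 22 ms delay, the spike counts, the 5 ms bin width, and the function name `cross_correlogram` are all assumptions chosen for illustration.

```python
import random
from collections import Counter

def cross_correlogram(ref_spikes, target_spikes, max_lag_ms=50, bin_ms=5):
    """Count target spikes at each lag relative to every reference spike.

    Keys are the left edges of lag bins in ms. A positive lag means the
    target neuron fired after the reference neuron.
    """
    counts = Counter()
    for t_ref in ref_spikes:
        for t_tgt in target_spikes:
            lag = t_tgt - t_ref
            if -max_lag_ms <= lag < max_lag_ms:
                bin_start = int(lag // bin_ms) * bin_ms
                counts[bin_start] += 1
    return counts

# Synthetic data (hypothetical): a "stimulus" neuron that sometimes drives
# a "context" neuron about 22 ms later, plus unrelated background spikes.
rng = random.Random(0)
DURATION_MS = 60_000
TRUE_DELAY_MS = 22

stimulus_spikes = [rng.uniform(0, DURATION_MS) for _ in range(200)]
driven = [
    t + rng.gauss(TRUE_DELAY_MS, 1.5)   # delayed, slightly jittered follow-up
    for t in stimulus_spikes
    if rng.random() < 0.6               # only some stimulus spikes are followed
]
background = [rng.uniform(0, DURATION_MS) for _ in range(200)]
context_spikes = driven + background

correlogram = cross_correlogram(stimulus_spikes, context_spikes)
peak_lag = max(correlogram, key=correlogram.get)
print(f"peak lag bin starts at {peak_lag} ms")
```

A peak at a positive lag means the reference neuron tends to fire first. In this toy setup the peak falls in the bin containing the built-in 22 ms delay, which is how a consistent lead of tens of milliseconds would show up.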

[Figure: separate populations of stimulus-selective and context-selective neurons across the hippocampus, parahippocampal cortex, and entorhinal cortex]

When Neuron Count Reveals Neuron Function

The rarity of conjunctive neurons tells a deeper story. The human brain invested in largely orthogonal populations—separate spaces for items and contexts linked through coordination and plasticity, not pre-coded conjunctions. This is a design choice, not a limitation.

Rigidly conjunctive encoding would make certain operations easy but others impossible. Imagine recognizing your friend’s face only if that neuron fired in the restaurant where you first met. Imagine applying a mathematical principle only in the classroom where you learned it.

The human brain opted for flexibility over speed, generalization over detail-specificity.

Stimulus information flows through sensory regions into the entorhinal cortex, then to the hippocampus, where context information already resides. Stimulus signals are gated by context-sensitive hippocampal circuits, and synaptic plasticity cements the associations in real time.

Instead of needing thousands of dedicated neurons for thousands of combinations, the brain achieves flexibility with two populations, plasticity, and real-time coordination across separated neural spaces.
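The scaling argument can be made concrete with a toy count. This is a sketch under a strong simplifying assumption that is not taken from the study: one cell per item, one cell per context, and, in the conjunctive scheme, one cell per item-context pair. The function names and example sizes are hypothetical.

```python
def conjunctive_cells(n_items, n_contexts):
    """Toy conjunctive code: one dedicated cell per item-context pair."""
    return n_items * n_contexts

def factorized_cells(n_items, n_contexts):
    """Toy factorized code: one cell per item plus one per context.

    Pairs are not stored as cells; they are bound on demand.
    """
    return n_items + n_contexts

# Hypothetical sizes, chosen only to show how the gap widens.
for n_items, n_contexts in [(10, 3), (1_000, 100), (100_000, 1_000)]:
    pairs = conjunctive_cells(n_items, n_contexts)
    separate = factorized_cells(n_items, n_contexts)
    print(f"{n_items} items x {n_contexts} contexts: "
          f"{pairs} conjunctive cells vs {separate} separate cells")
```

The conjunctive count grows with the product of items and contexts, while the factorized count grows with their sum. The price of the smaller count is that every pairing has to be assembled through coordination and plasticity rather than read out from a dedicated cell.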

A Uniquely Human Solution to Memory’s Central Problem

This architecture solves the same binding problem as rodent conjunctive encoding. The human brain uses populations, plasticity, and time instead of single neurons.

That choice pays off in recognizing a face across contexts, applying rules across scenarios, and imagining novel combinations. Memory becomes less like a filing cabinet and more like an orchestra. Neurons play independently, but their coordination creates memory.

Key implications:

- Rodent model doesn’t scale: The finding challenges the decades-old assumption that human memory works like a scaled-up version of rodent memory circuits.

- Fundamental reorganization: The human medial temporal lobe maintains separate representational spaces linked through real-time coordination and plasticity, not pre-coded conjunctive cells.

- Flexibility through modularity: This modular design allows human memory to be simultaneously specific about context and flexible across contexts—a balance impossible with rigid conjunctive encoding.

Citation: Bausch M, Niediek J, Reber TP, Mackay S, Boström J, Elger CE, Mormann F. Distinct neuronal populations in the human brain combine content and context. Nature. 2026;650:690–696. DOI: 10.1038/s41586-025-09910-2

Author affiliations: Department of Epileptology, University Hospital Bonn, Bonn, Germany; Machine Learning Group, Technische Universität Berlin, Berlin, Germany; Faculty of Psychology, UniDistance Suisse, Brig, Switzerland; Department of Neurosurgery, University Hospital Bonn, Bonn, Germany.