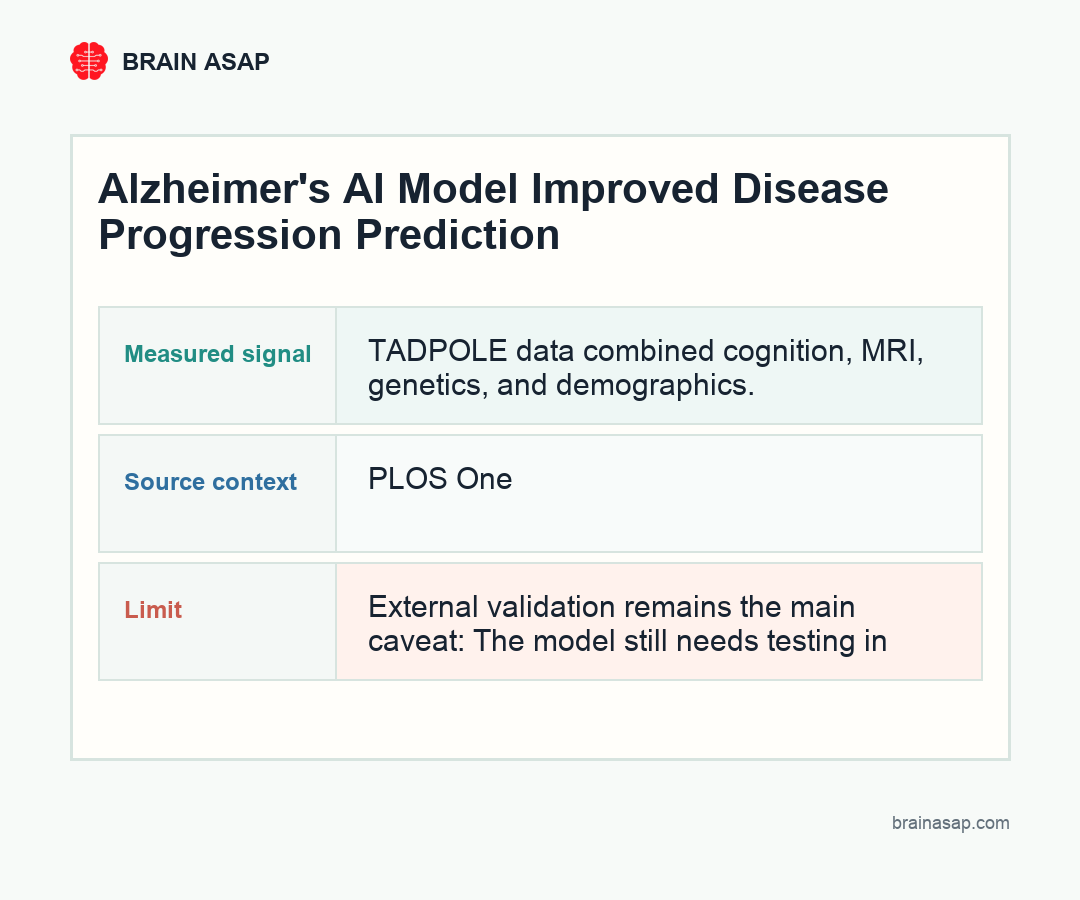

TL;DR: A 2026 machine-learning study in PLOS One reported that its final SNP-NF model reached mAUC 0.965, recall 0.929, and precision 0.929. The authors reported gains of about 3% in mAUC, 1% in precision, and 0.7% in recall over their previous neural-process model.

Key Findings

- SNP-NF performance was high: The final model reported mAUC 0.965, recall 0.929, and precision 0.929 on the TADPOLE benchmark.

- Normalizing flows added a measurable gain: Researchers reported about 3% higher mAUC, 1% higher precision, and 0.7% higher recall compared with their previous neural-process model.

- TADPOLE supplied multimodal inputs: The model used cognitive, neuroimaging, genetic, and demographic features from the Alzheimer’s Disease Prediction of Longitudinal Evolution dataset.

- Benchmark performance was the claim: The study supports stronger disease-progression modeling, not a ready clinical diagnostic tool.

- External validation remains the main caveat: The model still needs testing in independent cohorts with different scanners, missing-data patterns, and clinical workflows.

Source: PLOS One (2026) | Al-anbari et al.

Alzheimer’s disease progression is difficult to model because patients do not move through cognitive stages at the same speed. Some remain stable, some convert from mild cognitive impairment to dementia, and many have incomplete measurements across visits.

The study tested an AI approach that combines neural processes with normalizing flows. Neural processes help represent uncertain patient trajectories, while normalizing flows help model complex probability distributions.

Core result: this is a model-performance claim, not a diagnosis claim. The final SNP-NF model reported mAUC 0.965, recall 0.929, and precision 0.929. That means the algorithm performed strongly on the TADPOLE progression task, while clinical value still depends on testing outside that benchmark.

TADPOLE Data Fed the Alzheimer's AI Model

Design: a multimodal machine-learning study using the Alzheimer’s Disease Prediction of Longitudinal Evolution dataset. Sample: TADPOLE longitudinal data with cognitive, neuroimaging, genetic, and demographic features.

TADPOLE is a structured Alzheimer’s disease benchmark with repeated clinical, imaging, and genetic inputs. This makes it well suited for comparing models, but it is still a curated dataset rather than a live neurology clinic.

- Cognitive data: Clinical and neuropsychological scores described current function.

- Imaging data: Brain measures supplied structural disease information.

- Genetic data: Risk-linked features helped describe background susceptibility.

- Demographics: Age and related variables anchored the trajectory model.

Normalizing Flows Modeled Complex Alzheimer Trajectories

The main result belongs in that benchmark context: mAUC 0.965, recall 0.929, and precision 0.929. Those numbers suggest strong multi-class prediction inside the reported dataset, not automatic readiness for clinical triage.

The comparison with the group’s earlier neural-process model is the practical increment. A roughly 3% mAUC gain is not a brand-new diagnostic standard, but it does suggest that normalizing flows helped model more complex disease trajectories.

Why normalizing flows matter: Alzheimer progression data are irregular. Patients can miss visits, convert at different speeds, and show different combinations of cognitive, imaging, and genetic information.

- Neural process component: The model can represent patient trajectories with uncertainty instead of forcing every patient into one fixed disease path.

- Normalizing-flow component: The added flow layer helps model more complex probability distributions, which is useful when clinical and imaging variables do not change linearly.

- SNP component: Genetic features add background risk information, but they work as part of the multimodal model rather than as standalone predictors.

- Benchmark boundary: TADPOLE is useful for comparing algorithms, but real clinics have noisier follow-up, scanner variation, and missing fields.
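To make the normalizing-flow idea concrete, here is a minimal sketch of the change-of-variables rule that flows rely on. It is not the study's architecture: the `AffineFlow` class, its parameters, and the numbers are hypothetical, and a single affine layer is the simplest possible case. Practical flows stack many learned, nonlinear invertible layers.

```python
import math


def standard_normal_logpdf(z):
    """Log-density of the standard normal base distribution."""
    return -0.5 * (z * z + math.log(2.0 * math.pi))


class AffineFlow:
    """Toy one-layer flow: x = shift + exp(log_scale) * z (hypothetical example)."""

    def __init__(self, shift, log_scale):
        self.shift = shift
        self.log_scale = log_scale

    def inverse(self, x):
        # Map an observed value back to the base space.
        return (x - self.shift) * math.exp(-self.log_scale)

    def log_prob(self, x):
        # Change of variables:
        # log p_x(x) = log p_z(f^{-1}(x)) + log |d f^{-1}(x) / dx|
        z = self.inverse(x)
        return standard_normal_logpdf(z) - self.log_scale


# Illustrative values only, not taken from the study.
flow = AffineFlow(shift=2.0, log_scale=math.log(3.0))
x = 3.5
flow_logp = flow.log_prob(x)

# Sanity check: an affine flow over a standard normal is Normal(mean=2, std=3).
closed_form = (
    -0.5 * ((x - 2.0) / 3.0) ** 2
    - math.log(3.0)
    - 0.5 * math.log(2.0 * math.pi)
)
print(flow_logp, closed_form)
```

The two printed values agree because the log-determinant term (`-log_scale`) accounts for how the transformation stretches the base density. More expressive flows keep the same bookkeeping while bending the distribution into shapes a single Gaussian cannot represent.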

Measurement detail: For a general audience, the safest way to read the figure is as a performance comparison. mAUC reflects multi-class discrimination, while recall and precision show how well the model captured cases without overcalling them.
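As a rough illustration of how these three metrics are computed, the sketch below scores a toy three-class problem. It rests on two assumptions the article does not confirm: that precision and recall are macro-averaged across classes, and that mAUC follows the pairwise (Hand and Till style) definition commonly used for multi-class AUC. The class labels, probabilities, and function names are hypothetical and are not the study's data or code.

```python
from itertools import combinations


def macro_precision_recall(y_true, y_pred, classes):
    """Macro-averaged precision and recall (assumed averaging scheme)."""
    precisions, recalls = [], []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precisions.append(tp / (tp + fp) if tp + fp else 0.0)
        recalls.append(tp / (tp + fn) if tp + fn else 0.0)
    return sum(precisions) / len(classes), sum(recalls) / len(classes)


def pairwise_auc(pos_scores, neg_scores):
    """Chance that a random positive outscores a random negative (ties count half)."""
    wins = sum((p > q) + 0.5 * (p == q) for p in pos_scores for q in neg_scores)
    return wins / (len(pos_scores) * len(neg_scores))


def multiclass_auc(y_true, probs, classes):
    """Average of pairwise AUCs over all unordered class pairs."""
    pairs = list(combinations(range(len(classes)), 2))
    total = 0.0
    for i, j in pairs:
        # A(i|j): separate class i from class j using the class-i probability.
        a_ij = pairwise_auc(
            [p[i] for t, p in zip(y_true, probs) if t == classes[i]],
            [p[i] for t, p in zip(y_true, probs) if t == classes[j]],
        )
        # A(j|i): the same comparison using the class-j probability.
        a_ji = pairwise_auc(
            [p[j] for t, p in zip(y_true, probs) if t == classes[j]],
            [p[j] for t, p in zip(y_true, probs) if t == classes[i]],
        )
        total += 0.5 * (a_ij + a_ji)
    return total / len(pairs)


# Hypothetical toy data: six patients, three diagnostic classes.
classes = ["CN", "MCI", "AD"]
y_true = ["CN", "CN", "MCI", "MCI", "AD", "AD"]
probs = [
    [0.80, 0.15, 0.05],
    [0.60, 0.30, 0.10],
    [0.30, 0.50, 0.20],
    [0.50, 0.20, 0.30],  # an MCI patient the toy model calls CN
    [0.10, 0.30, 0.60],
    [0.05, 0.25, 0.70],
]
y_pred = [classes[max(range(len(p)), key=p.__getitem__)] for p in probs]

precision, recall = macro_precision_recall(y_true, y_pred, classes)
mauc = multiclass_auc(y_true, probs, classes)
print(f"precision={precision:.3f} recall={recall:.3f} mAUC={mauc:.3f}")
```

Precision and recall depend on the final predicted label, while mAUC depends on how well the predicted probabilities rank patients across every pair of classes. That is why a model can report different values for the three metrics on the same predictions.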

SNP-NF improved Alzheimer’s progression prediction in the TADPOLE benchmark dataset. External cohorts, missing-data patterns, and real clinical workflows are the next pressure tests.

The anchor is the final SNP-NF model, which reported mAUC 0.965, recall 0.929, and precision 0.929. The increment is the reported gain of about 3% in mAUC, 1% in precision, and 0.7% in recall over the authors' previous neural-process model.

A stronger version of the evidence would test the same model on independent cohorts before the conclusion is used in a broader clinical or public-health frame.

Clinical value still depends on external validation outside the benchmark dataset. That limit does not weaken the finding; it tells readers exactly where the current evidence stops.

Until the same model is tested on external cohorts with different data quality and follow-up patterns, the most accurate interpretation stays specific: what was measured, where it was measured, what changed, and what still needs confirmation.

SNP-NF Reached mAUC 0.965

The evidence supports a narrow interpretation: SNP-NF improved Alzheimer’s progression prediction in a benchmark dataset. External cohorts, missing-data patterns, and real clinical workflows are the next pressure tests.

The genetic component also matters because the model combined SNP information with longitudinal disease features. That is different from claiming a single genetic variant can predict progression on its own.

External Alzheimer Validation Is Still Needed

Main limitation: clinical value still depends on external validation outside the benchmark dataset.

- Benchmark dataset: TADPOLE supports model comparison, but it is not the same as everyday clinic data.

- Calibration: Predicted probabilities need to match observed outcomes.

- Missing fields: Real records may lack imaging, genetics, or repeated visits.

- Workflow: A model should expose uncertainty rather than hide it.
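The calibration point can be checked with a simple binning procedure. The sketch below computes expected calibration error (ECE), a common summary of the gap between predicted confidence and observed accuracy. The article does not say how, or whether, the study assessed calibration, so ECE is a stand-in here. The function name, bin count, and toy values are hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=5):
    """Weighted average gap between mean confidence and accuracy per bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Equal-width bins on [0, 1]; a confidence of exactly 1.0 goes in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    n = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += (len(members) / n) * abs(mean_conf - accuracy)
    return ece


# Hypothetical predictions: top-class confidence and whether the call was right.
confidences = [0.95, 0.90, 0.85, 0.62, 0.55, 0.65]
correct = [1, 1, 0, 1, 0, 0]

ece = expected_calibration_error(confidences, correct)
print(f"ECE = {ece:.3f}")
```

An ECE near zero means that predictions made with, say, 90% confidence are right about 90% of the time. A model can score well on ranking metrics inside a benchmark and still be poorly calibrated on a new cohort, which is one reason external validation matters.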

The caveat is practical: model accuracy can fall when the population, scanner mix, data completeness, or diagnostic labels change. A high benchmark score is encouraging, but it is not the same as clinical deployment.

Alzheimer's AI Should Support Clinical Judgment

The best reading is that SNP-NF improved an Alzheimer’s progression model in a formal benchmark.

- Best use: Treat the reported mAUC, recall, and precision as evidence of model performance inside TADPOLE.

- Do not overread: Do not read the result as a ready-made clinical diagnostic tool.

- Next question: Test the same model on external cohorts with different data quality and follow-up patterns.

That keeps the interpretation grounded: stronger AI prediction, with validation still doing the heavy lifting.

Citation: DOI: 10.1371/journal.pone.0345958; Al-anbari et al.; Normalizing flow based neural processes for Alzheimer's disease progression prediction; PLOS One; 2026.

Study Design: A multimodal machine-learning study using the Alzheimer’s Disease Prediction of Longitudinal Evolution dataset.

Sample: TADPOLE longitudinal data with cognitive, neuroimaging, genetic, and demographic features.

Key Statistic: The final SNP-NF model reported mAUC 0.965, recall 0.929, and precision 0.929.

Caveat: Clinical value still depends on external validation outside TADPOLE, especially in cohorts with different scanners, missing-data patterns, diagnostic labels, and follow-up schedules.